Cancer and diabetes are two major contributors to mortality, ranking among the top 10 causes of death worldwide according to the World Health Organization (WHO). Both diseases pose a complex challenge to public health systems, one that requires comprehensive strategies to reduce their impact on global mortality rates. Metformin is the main first-line medication prescribed for the management of type 2 diabetes, and it is known for its efficacy and tolerability. Metformin has also gained attention for its role in other contexts, including cancer and cardiovascular disease.

Prostate cancer is a significant global health concern and a leading cause of cancer-related deaths among men. While treatments like radical prostatectomy (RP) and radiotherapy are effective for many patients, for others the disease progresses to metastasis, which can be difficult to treat and is often fatal. Previous studies have pointed to metformin’s potential role in prostate cancer, but conflicting findings have left the question of its use for this disease unresolved. A recent breakthrough study headed by Alexandros Papachristodoulou from Dr. Abate-Shen’s group in the Department of Molecular Pharmacology and Therapeutics at Columbia University Irving Medical Center has shed light on a potential new avenue for the use of this anti-diabetic drug in the treatment of prostate cancer.

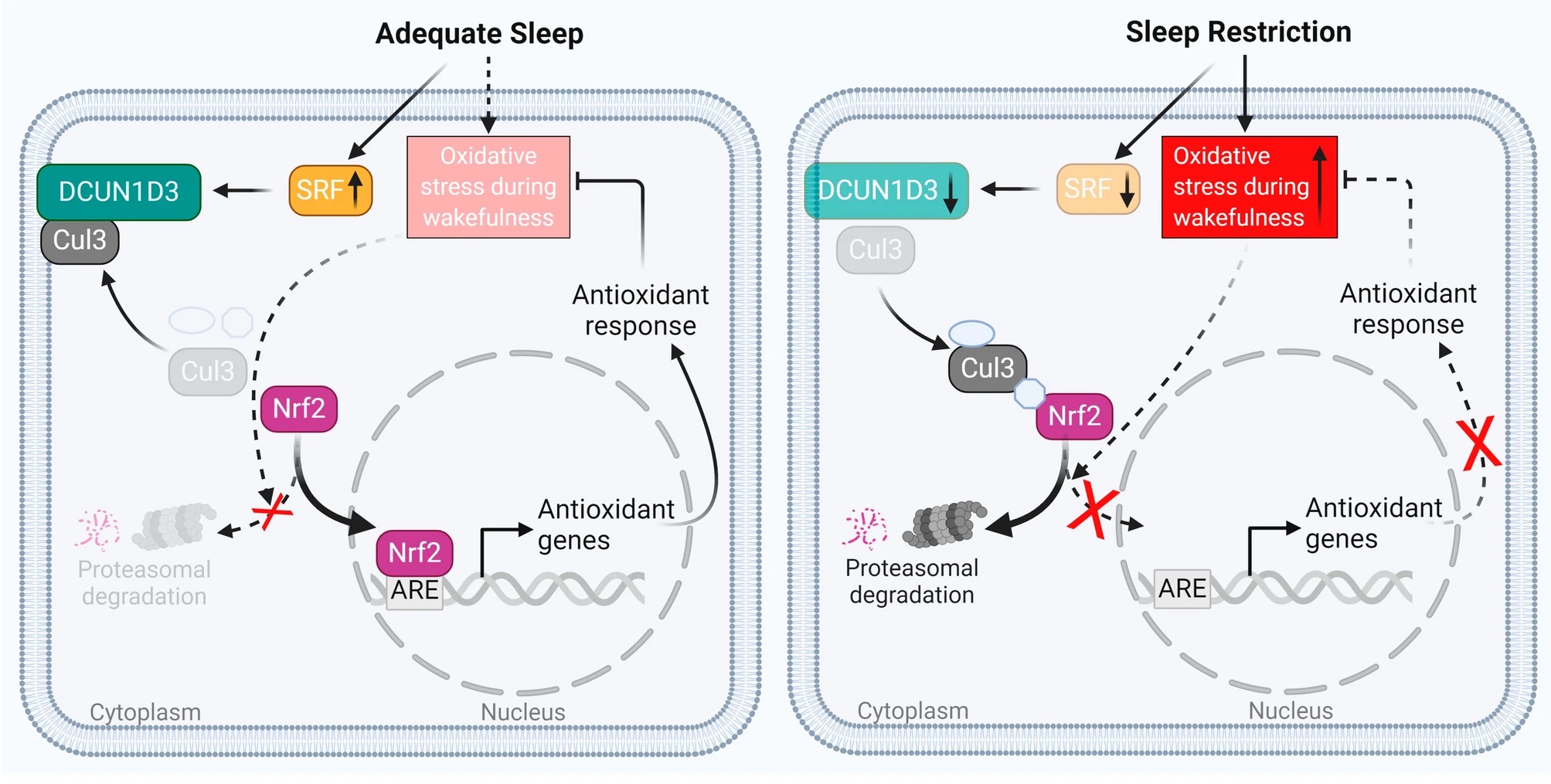

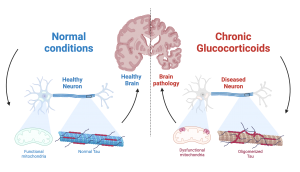

The prostate gland is susceptible to inflammation and oxidative stress, both of which can accelerate prostate cancer progression. Interestingly, oxidative stress influences the behavior of NKX3.1, a gene that plays a crucial role in protecting the prostate epithelium from cancer-related stress. In their work, Dr. Papachristodoulou and colleagues examine the interplay between NKX3.1 and the widely used diabetes drug metformin. This interplay takes place in the mitochondria. NKX3.1 helps protect against harmful free radicals (unstable molecules that can damage cells by stealing electrons from other molecules) and supports normal mitochondrial function, while metformin has a known ability to regulate mitochondrial function and reduce oxidative stress.

The study, published in 2024, used a comprehensive set of strategies to investigate its hypothesis, including in vitro work with human prostate cancer cell lines, in vivo work using mouse models, and analysis of retrospective patient cohorts. The authors show an impressive reduction in tumor size in mice that were placed under oxidative stress by exposure to the herbicide paraquat and treated with metformin. This difference was only seen if the tumors did not express NKX3.1. They also show that metformin treatment can fully rescue the mitochondrial function lost upon oxidative stress in prostate cancer cells lacking NKX3.1, pointing to a possible molecular mechanism for the effects seen in mice.

Moreover, the study analyzed biochemical recurrence (BCR)-free survival, evaluated by blood levels of prostate-specific antigen (PSA), the antigen used to diagnose and monitor prostate cancer in patients. This parameter is used in the clinic to show the percentage of patients who remain disease-free over time after RP. Using data from two patient cohorts, the researchers showed that patients expressing low levels of NKX3.1 and taking metformin had a much higher rate of BCR-free survival than patients with low NKX3.1 who were not taking the medication. Patients expressing high levels of NKX3.1 showed no difference in BCR-free survival whether or not they were taking the drug. In addition, the authors investigated disease progression in a group of prostate cancer patients who were followed for up to 10 years. Among the patients with low NKX3.1, all of those treated with metformin had their disease classified more favorably during follow-up, while most patients not exposed to the medication saw their disease worsen.

These findings open exciting possibilities for personalized treatment strategies for prostate cancer. They offer hope for a future where precision medicine plays a pivotal role in combating this disease and improving patient outcomes. By identifying patients with low NKX3.1 expression levels, clinicians may be able to tailor metformin therapy to those who are most likely to benefit, potentially improving patients’ quality of life and extending survival. While further research and clinical trials are needed to validate these findings, this study represents a significant step forward in understanding the biology of prostate cancer and exploring novel therapeutic avenues.

Reviewed by: Trang Nguyen, Erin Cullen

Figure 1: The number of infections in the households is closely followed by the number of hospitalizations reported. The blue line is a measure of the infection rate of a household and the gray region around it is the variation in that rate.

Figure 2: All possibilities of transmission among the three age groups were tested in the full within household transmission model.